Protego Weekly Update: May 29th, 2019

In this week’s Protego weekly update, we focus on a particular debate that roiled technology policy circles in recent days.

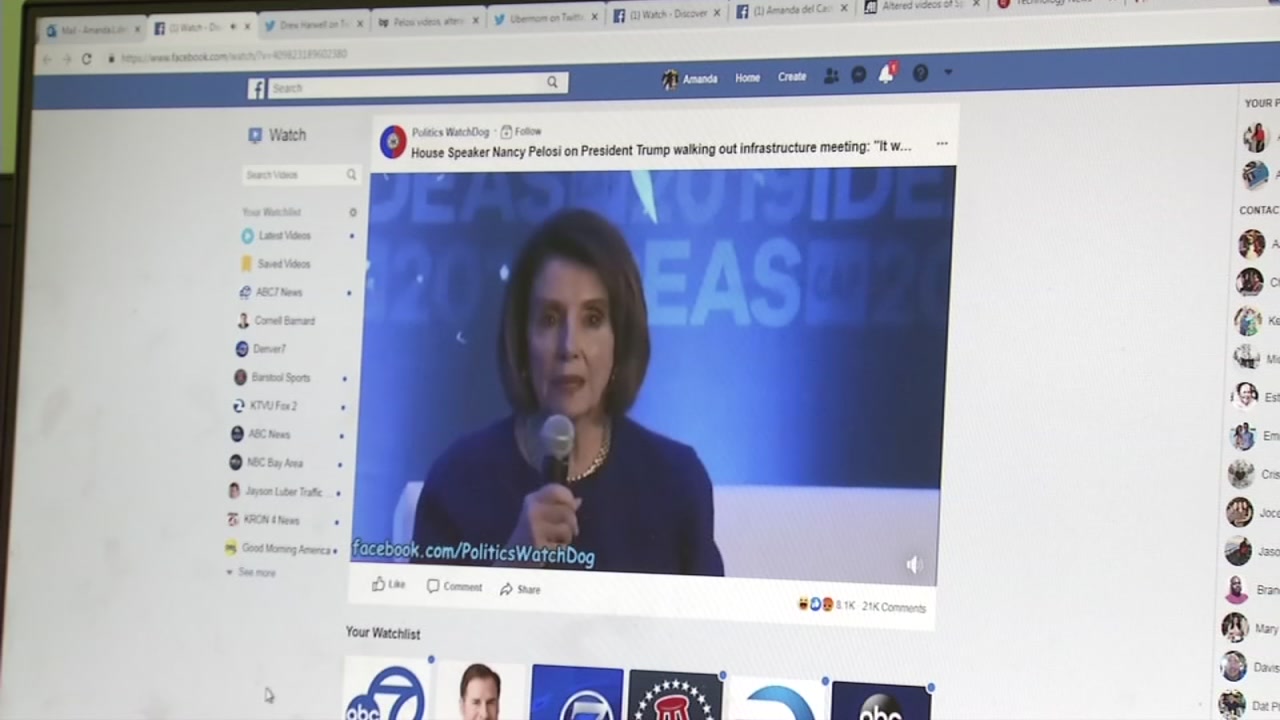

Last week, a video posted to Facebook appeared to show Nancy Pelosi drunkenly slurring her words. It was subsequently posted to YouTube and Twitter, where it was even amplified by former New York mayor and Trump personal lawyer Rudy Giuliani, who later took it down. The video was almost immediately recognized as a fake, but that did not stop it from racking up millions of views.

To most, the response by the social platforms left a lot to be desired. Facebook head of policy Monika Bickert gave an interview to CNN’s Anderson Cooper, defending Facebook’s decision not to take down the video and maintaining the platform’s general stance that it does not get into making content decisions except in extreme cases such as hate speech. But the company did take steps to limit its spread and to notify users that the video was doctored. “Anybody who is seeing this video in news feed, anyone who is going to share it to somebody else, anybody who has shared it in the past, they are being alerted that this video is false,” Bickert told CNN.

What to do about such incidents? Columnists, commentators, politicians and tech experts did not hesitate to opine:

- Nancy Pelosi herself came down hard on Facebook, saying its handling of the incident proved why she regarded the platform as “willing enablers” of disinformation. “They’re lying to the public. … I think they have proven — by not taking down something they know is false — that they were willing enablers of the Russian interference in our election.”

- Kara Swisher also slammed Facebook. “The only thing the incident shows is how expert Facebook has become at blurring the lines between simple mistakes and deliberate deception, thereby abrogating its responsibility as the key distributor of news on the planet.”

- New York Times columnist Farhad Manjoo used the incident to reflect on an even bigger disinformation threat: Fox News. At about the same time as the fake video of Pelosi was rocketing across social media, President Trump shared another video, edited to make it seem as if Pelosi was stammering through a speech. That video was produced by Fox News. “While Facebook moved quickly to limit the spread of the doctored Pelosi clip, Fox is neither apologizing for airing its montage nor taking it down, because this sort of manipulated video fits within the network’s ethical bounds.”

At Protego Press, we hosted a debate about possible rules that might be considered as part of a regulatory approach to stopping the spread of misinformation on social platforms. Justin Hendrix and Bryan Jones looked to a discarded FCC rule, known as the Personal Attack Rule, for inspiration, proposing that when disinformation tactics are used to target a public figure, it should prompt the opportunity for redress, including opening a channel to share a corrective message to users exposed to the original content that prompted the offense. “In an age in which technology makes it easy to manipulate media and instantly reach billions, perhaps we need to look for the wisdom in the old rules,” they argued.

Not so fast, said Ellen Goodman, a professor at Rutgers Law School, where she co-founded and co-directs the Rutgers Institute for Information Policy & Law (RIIPL). In a rebuttal for Protego Press, Goodman argued that “the idea that we should be looking to media law principles and values in regulating the digital platforms is right.” But she noted that the rule never worked well, that it is unclear who could enforce such a rule now, and, importantly, that “in part because it was impossible to predict when an attack might qualify as a ‘Personal Attack’ under the rule, the existence of the rule was chilling of editorial independence and First Amendment protected speech.” Faster, better labeling and more attention by the platforms would work better than reviving this old rule, she argued.

In the end, America should be as innovative and energetic about generating new ideas on how to reduce toxicity and disinformation and appropriately regulate social media as it was about developing these technologies and platforms in the first place. We’ll need more debate, much more, going forward. That brings us to our final piece for this weekly update: a proposal from Renée DiResta on what to do about disinformation. DiResta summarizes where there is consensus on what to do next, and argues the time to act is now. “We can’t afford to spend another year establishing that there’s a problem. It’s time to come together to implement potent prescriptions to inoculate society against disinformation and build resilience.”